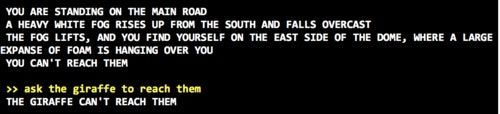

I’ve done several experiments with a text-generating neural network called GPT-2. Trained at great expense by OpenAI (to the tune of tens of thousands of dollars’ worth of computing power), GPT-2 learned to imitate all kinds of text from the internet. I’ve interacted with the basic model, discovering