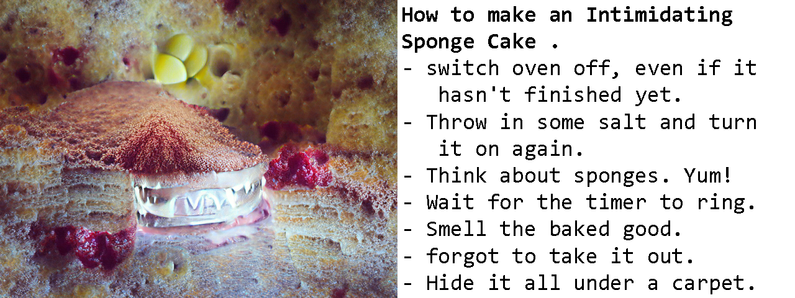

One strange thing I've noticed about otherwise reasonably competent text-generating neural nets is how their lists tend to go off the rails. I noticed this first with GPT-2 [https://aiweirdness.com/post/185085792997/gpt-2-it-cant-resist-a-list]. But it turns out GPT-3 is no exception.

Here's the largest model,