Hey kids! What sound does a woolly horse-sheep make?

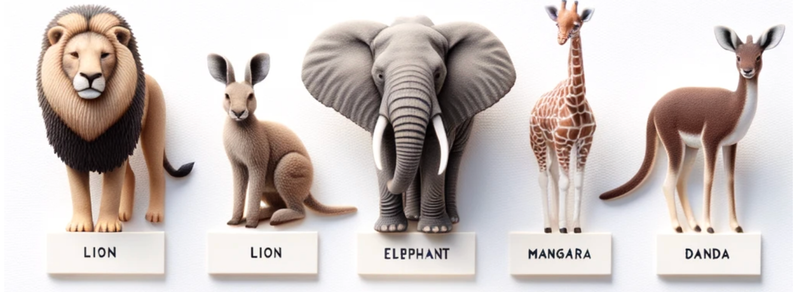

The image above is what you get when you ask DALL-E 3 (via ChatGPT) for some basic educational material: "Please generate an illustrated poster to help children learn which sounds common animals make. Each animal should be pictured with a speech