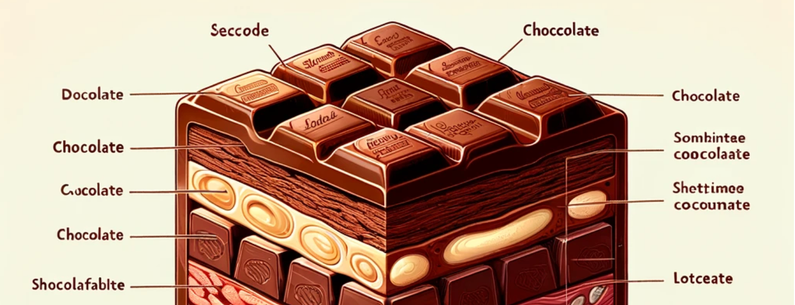

Hey look, it's a guide to basic shapes!

Not only does it have the basic shapes like circle, tringle, hectanbie, and sqale, it also has some of the more advanced shapes like renstqon, hoboz, and flotn!

The fact that even a kindergartener can call out this DALL-E 3-generated